What Should We Learn from Efficient Processing of Deep Neural Networks

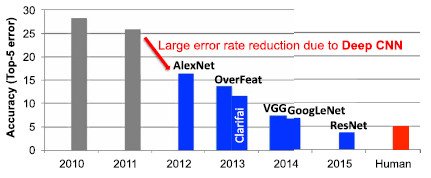

Neural networks are a set of biologically inspired algorithms that can be used to recognize patterns. Deep neural networks (DNNs) are neural networks with many more layers than traditional neural networks. This depth lets DNNs learn high-level features with more complexity and abstraction than shallower networks can. The use of DNNs has grown explosively in the past few years, and they are now widely used in many artificial intelligence (AI) applications, including computer vision, speech recognition, and robotics. While DNNs deliver state-of-the-art accuracy on many AI tasks, that accuracy comes at the cost of high computational complexity. Accordingly, techniques that enable efficient processing of DNNs, improving energy efficiency and throughput without sacrificing application accuracy or increasing hardware cost, are critical to the wide deployment of DNNs in AI systems. The article "Efficient Processing of Deep Neural Networks: A Tutorial and Survey" by V. Sze, Y.-H. Chen, T.-J. Yang, and J. S. Emer, published in Proceedings of the IEEE in December 2017, provides a comprehensive tutorial and survey of recent advances toward enabling efficient processing of DNNs.
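To give a rough sense of what "high computational complexity" means in practice, the sketch below counts multiply-accumulate (MAC) operations for a single convolutional layer and a single fully connected layer. The function names and layer shapes are hypothetical, chosen only for illustration and not taken from the article, and the count assumes a standard dense convolution with a batch size of one and no bias term.

```python
def conv_layer_macs(out_h, out_w, out_channels, in_channels, kernel_h, kernel_w):
    """MACs for one standard convolutional layer (batch size 1, no bias).

    Each output value needs in_channels * kernel_h * kernel_w MACs,
    and there are out_h * out_w * out_channels output values.
    """
    return out_h * out_w * out_channels * in_channels * kernel_h * kernel_w


def fc_layer_macs(in_features, out_features):
    """MACs for one fully connected layer (batch size 1, no bias)."""
    return in_features * out_features


# Hypothetical layer shapes, for illustration only.
conv_macs = conv_layer_macs(out_h=56, out_w=56, out_channels=64,
                            in_channels=64, kernel_h=3, kernel_w=3)
fc_macs = fc_layer_macs(in_features=4096, out_features=1000)

print(f"conv layer: {conv_macs:,} MACs")  # conv layer: 115,605,504 MACs
print(f"fc layer:   {fc_macs:,} MACs")    # fc layer:   4,096,000 MACs
```

Even this single mid-sized convolutional layer needs more than a hundred million MACs per input, and a deep network stacks many such layers, which is why energy efficiency and throughput become central design concerns.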

The authors provide an overview of DNNs, then discuss the hardware platforms and architectures that support them, and highlight key trends in reducing the computation cost of DNNs, either through hardware design changes alone or through joint changes to the hardware design and the DNN algorithm. The article also summarizes development resources that help the reader get started quickly in this field. In addition, it highlights the benchmarking metrics and design considerations that should be used to evaluate the rapidly growing number of DNN hardware designs, some of which include algorithmic codesign, being proposed in academia and industry. The article concludes with recent implementation trends and opportunities.