SPS Feed

Top Reasons to Join SPS Today!

1. IEEE Signal Processing Magazine

2. Signal Processing Digital Library*

3. Inside Signal Processing Newsletter

4. SPS Resource Center

5. Career advancement & recognition

6. Discounts on conferences and publications

7. Professional networking

8. Communities for students, young professionals, and women

9. Volunteer opportunities

10. Coming soon! PDH/CEU credits

The Latest News, Articles, and Events in Signal Processing

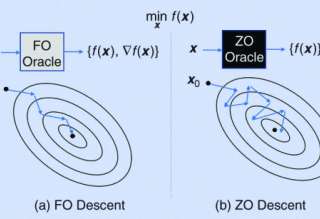

Zeroth-order (ZO) optimization is a subset of gradient-free optimization that arises in many signal processing and machine learning (ML) applications. It solves optimization problems in much the same way as gradient-based methods but requires only function evaluations, not the gradient itself. Specifically, ZO optimization iterates over three major steps: gradient estimation, descent direction computation, and solution update. In this article, we provide a comprehensive review of ZO optimization, with an emphasis on the underlying intuition, optimization principles, and recent advances in convergence analysis.
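The three steps named in this abstract can be sketched in a few lines. The following is a minimal illustration, not the article's own algorithm: it assumes a forward-difference gradient estimate averaged over random Gaussian directions (one common choice among several), uses the negated estimate as the descent direction, and applies a fixed step size. The function names, the smoothing parameter `mu`, the step size, and the quadratic test objective are all hypothetical choices made for this example.

```python
import random


def zo_gradient_estimate(f, x, mu=1e-4, num_directions=20, rng=random):
    """Estimate the gradient of f at x using only function evaluations.

    Assumption: forward differences along random Gaussian directions,
    averaged over num_directions samples.
    """
    d = len(x)
    fx = f(x)
    grad = [0.0] * d
    for _ in range(num_directions):
        u = [rng.gauss(0.0, 1.0) for _ in range(d)]
        x_pert = [xi + mu * ui for xi, ui in zip(x, u)]
        # Finite-difference slope of f along the direction u.
        slope = (f(x_pert) - fx) / mu
        for i in range(d):
            grad[i] += slope * u[i] / num_directions
    return grad


def zo_gradient_descent(f, x0, step_size=0.05, num_iters=500, seed=0):
    """Run a basic ZO descent loop with a fixed (hypothetical) step size."""
    rng = random.Random(seed)
    x = list(x0)
    for _ in range(num_iters):
        # Step 1: gradient estimation from function values only.
        g = zo_gradient_estimate(f, x, rng=rng)
        # Step 2: descent direction (here simply the negated estimate).
        direction = [-gi for gi in g]
        # Step 3: solution update.
        x = [xi + step_size * di for xi, di in zip(x, direction)]
    return x


def quadratic(x):
    """Hypothetical test objective with its minimum at (1, 1, ..., 1)."""
    return sum((xi - 1.0) ** 2 for xi in x)


x_final = zo_gradient_descent(quadratic, [0.0, 0.0, 0.0])
print(x_final)
```

On this smooth quadratic, the iterates should approach the minimizer at all ones. Other estimators (for example, coordinate-wise differences or directions drawn uniformly from the unit sphere) would slot into step 1 without changing the rest of the loop.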

Optimization lies at the heart of machine learning (ML) and signal processing (SP). Contemporary approaches based on the stochastic gradient (SG) method are nonadaptive in the sense that their implementation employs prescribed parameter values that need to be tuned for each application. This article summarizes recent research and motivates future work on adaptive stochastic optimization methods, which have the potential to offer significant computational savings when training large-scale systems.

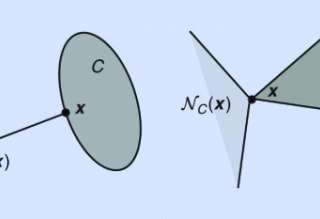

Many contemporary applications in signal processing and machine learning give rise to structured nonconvex nonsmooth optimization problems that can often be tackled by simple iterative methods quite effectively. One of the keys to understanding such a phenomenon (and, in fact, a very difficult conundrum even for experts) lies in the study of "stationary points" of the problem in question. Unlike smooth optimization, for which the definition of a stationary point is rather standard, there are myriad definitions of stationarity in nonsmooth optimization.

The articles in this special section focus on nonconvex optimization for signal processing and machine learning. Optimization is now widely recognized as an indispensable tool in signal processing (SP) and machine learning (ML). Indeed, many of the advances in these fields rely crucially on the formulation of suitable optimization models and deployment of efficient numerical optimization algorithms. In the early 2000s, there was a heavy focus on the use of convex optimization techniques to tackle SP and ML applications.

We are looking to hire a post-doctoral research fellow with a strong background in signal processing and machine learning for a project relating to graph signal processing, information processing/fusion, and machine learning.

Job Description

- Digitization is an important means of preserving the content of materials that are vulnerable to physical damage. In particular, paper-based (and especially historical) documents are an invaluable source of information. The goal of this PhD project is to develop machine learning tools for analyzing scans of documents.

Manuscript Due: August 31, 2021

Publication Date: April 2022

CFP Document

Signal processing and communication students? We are looking for you!

The Signal Acquisition, Modeling, Processing, and Learning (SAMPL) lab, headed by Prof. Yonina Eldar, is recruiting master's and PhD students for cutting-edge research in radar, communications, and analog-to-digital conversion.

Reykjavík University’s Language and Voice Lab (https://lvl.ru.is) is looking for experts in speech recognition and in speech synthesis. At the LVL you will be joining a research team working on exciting developments in language technology as a part of the Icelandic Language Technology Programme (https://arxiv.org/pdf/2003.09244.pdf).

Job Duties:

Manuscript Due: August 15, 2021

Publication Date: Midyear 2022

CFP Document

SPS Social Media

- IEEE SPS Facebook Page https://www.facebook.com/ieeeSPS

- IEEE SPS X Page https://x.com/IEEEsps

- IEEE SPS Instagram Page https://www.instagram.com/ieeesps/?hl=en

- IEEE SPS LinkedIn Page https://www.linkedin.com/company/ieeesps/

- IEEE SPS YouTube Channel https://www.youtube.com/ieeeSPS