What Should We Learn? For Faster AI, Mix Memory and Processing

If John von Neumann were designing a computer today, there’s no way he would build a thick wall between processing and memory. At least, that’s what computer engineer Naresh Shanbhag of the University of Illinois at Urbana-Champaign believes. The eponymous von Neumann architecture was published in 1945. It enabled the first stored-program, reprogrammable computers, and it has been the backbone of the industry ever since.

Now, Shanbhag thinks it’s time to switch to a design that’s better suited for today’s data-intensive tasks. In February, at the International Solid-State Circuits Conference (ISSCC), in San Francisco, he and others made their case for a new architecture that brings computing and memory closer together. The idea is not to replace the processor altogether but to add new functions to the memory that will make devices smarter without requiring more power.

Industry must adopt such designs, these engineers believe, in order to bring artificial intelligence out of the cloud and into consumer electronics. Consider a simple problem like determining whether or not your grandma is in a photo. Artificial intelligence built with deep neural networks excels at such tasks: A computer compares her photo with the image in question and determines whether they are similar, usually by performing some simple arithmetic. So simple, in fact, that moving the image data from stored memory to the processor takes 10 to 100 times as much energy as running the computation.
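To put that ratio in perspective, here is a rough back-of-the-envelope sketch in Python. Only the 10-to-100-times range comes from the text above; the unit energy `COMPUTE_ENERGY_PJ` and the helper `movement_share` are hypothetical names and values chosen for illustration, not measured figures.

```python
# Back-of-the-envelope sketch: what share of total energy goes to moving data
# when movement costs 10x to 100x as much as the computation itself?
# ASSUMPTION: COMPUTE_ENERGY_PJ is an arbitrary illustrative unit, not a measurement.

COMPUTE_ENERGY_PJ = 1.0  # hypothetical energy of the arithmetic, in picojoules


def movement_share(ratio: float, compute_energy: float = COMPUTE_ENERGY_PJ) -> float:
    """Return the fraction of total energy spent on data movement.

    ratio: how many times more energy the movement takes than the computation.
    """
    movement_energy = ratio * compute_energy
    return movement_energy / (movement_energy + compute_energy)


for ratio in (10, 100):
    share = movement_share(ratio)
    print(f"movement = {ratio}x compute -> {share:.1%} of total energy")
```

Under these assumptions, somewhere between about 91 and 99 percent of the energy goes to shuttling data rather than to the arithmetic, which is why shortening the trip matters more than speeding up the math.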

That’s the case for most artificial intelligence that runs on von Neumann architecture today. As a result, artificial intelligence is power hungry, neural networks are stuck in data centers, and computing is a major power drain in new technologies such as self-driving cars.

“The world is gradually realizing it needs to get out of this mess,” says Subhasish Mitra, an electrical engineer at Stanford University. “Compute has to come close to memory. The question is, how close?”

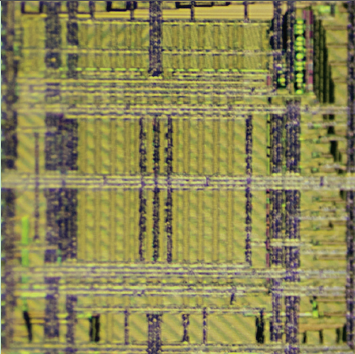

Mitra’s group uses an unusual architecture and new materials, layering carbon-nanotube integrated circuits on top of resistive RAM—much closer than when they’re built on separate chips. In a demo at ISSCC, their system could efficiently classify the language of a sentence.

Shanbhag’s group and others at ISSCC stuck with existing materials, using the analog control circuits that surround arrays of memory cells in new ways. Instead of sending data out to the processor, they program these analog circuits to run simple artificial intelligence algorithms. They call this design “deep in-memory architecture.”

Shanbhag doesn’t want to break up memory subarrays with processing circuits, because that would reduce storage density. He thinks that doing processing at the edges of subarrays is deep enough to get an energy and speed advantage without losing storage. Shanbhag’s group found a tenfold improvement in energy efficiency and a fivefold improvement in speed when using analog circuits to detect faces in images stored in static RAM.
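The appeal of computing at the edge of a memory array can be illustrated with a toy traffic count. The sketch below is not Shanbhag’s design and does not model analog circuits or energy; the array size, the dot-product workload, and the function names `conventional` and `in_memory` are all assumptions made for illustration. It only counts how many values would have to cross the boundary between memory and processor in each case.

```python
# Toy model of data traffic only; it does not model analog circuits or energy.
# ASSUMPTIONS: the array size, the dot-product workload, and all function
# names here are hypothetical illustrations, not the researchers' design.
import random


def conventional(rows, query):
    """Ship every stored row to the processor, then compute there."""
    moved = sum(len(row) for row in rows)  # values crossing the memory boundary
    scores = [sum(a * b for a, b in zip(row, query)) for row in rows]
    return scores, moved


def in_memory(rows, query):
    """Compute each score at the edge of the array; ship only the results."""
    scores = [sum(a * b for a, b in zip(row, query)) for row in rows]
    moved = len(query) + len(scores)  # query goes in, one score per row comes out
    return scores, moved


random.seed(0)
# 64 stored templates of 1,024 values each (hypothetical sizes)
rows = [[random.randint(0, 255) for _ in range(1024)] for _ in range(64)]
query = [random.randint(0, 255) for _ in range(1024)]

scores_a, moved_a = conventional(rows, query)
scores_b, moved_b = in_memory(rows, query)

assert scores_a == scores_b  # same answers either way
print(f"conventional: {moved_a} values moved; in-memory: {moved_b} values moved")
```

With these made-up sizes the conventional path moves 65,536 values and the in-memory path moves 1,088. Real gains depend on the circuits and the workload, so this ratio should not be read as a prediction of the measured results reported above.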

Mitra says it’s still unclear whether in-memory computing can provide a large enough benefit to topple existing architectures. He thinks its energy and speed must improve by 1,000 times to convince semiconductor companies, circuit designers, and programmers to make big changes.

Startups could lead the charge, says Meng-Fan Chang, an electrical engineer at National Tsing Hua University, in Taiwan. A handful of startups, including Texas-based Mythic, are developing similar technology to build dedicated AI circuits. “There is an opportunity to tap into smaller markets,” says Chang.

Katherine Bourzac. For Faster AI, Mix Memory and Processing: New computing architectures aim to bring machine learning to more devices. Spectrum, April 2018, pp. 12-13.