What Should We Learn? Signal Processing Improves Autonomous Vehicle Navigation Accuracy

Autonomous vehicles (AVs) are appearing everywhere: in the air, on the road, and even underground and underwater. Signal processing is helping to guide these vehicles more accurately, which ensures their safety and also protects the people who encounter them.

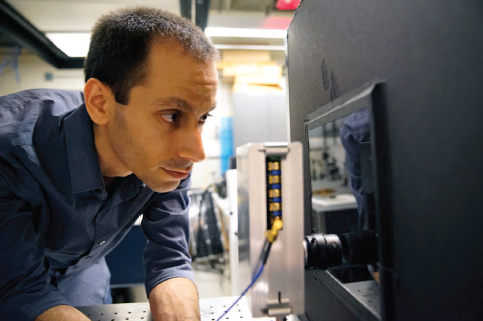

At the Massachusetts Institute of Technology (MIT) in Cambridge, researchers have created an imaging system that can generate accurate images of objects enveloped by fog so dense that human vision cannot penetrate it. The system can also be used to gauge an object’s distance. Fog has been a key obstacle in developing terrestrial AV navigation technologies that rely on visible light. Under normal operating conditions, however, such systems are generally preferable to radar-based technologies, both for their high resolution and for their ability to read road signs and track lanes.

Engineers at Nanyang Technological University (NTU) in Singapore believe that the ultrafast high-contrast camera they developed will help both terrestrial and aerial AVs navigate more precisely and safely. Unlike conventional optical cameras, which generally do not work well in darkness and are sometimes blinded by a flash of bright light, NTU’s smart camera can detect even the slightest movements and record objects in real time under a wide range of lighting conditions. Inspired by human vision, the sensor does not scan and check pixels at a conventional fixed frame rate. Instead, each pixel individually reports whether it has perceived a change.
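The per-pixel change reporting described above can be illustrated with a small sketch. This is not NTU's design: the function name `changed_pixels`, the brightness `threshold`, and the +1/-1 polarity values are assumptions made for illustration, and comparing two snapshots is a simplification of a sensor in which each pixel reports on its own rather than waiting for a frame.

```python
def changed_pixels(previous, current, threshold):
    """Return (row, col, polarity) for every pixel whose brightness
    changed by at least `threshold` between two snapshots.

    Hypothetical helper: polarity is +1 for brighter, -1 for darker.
    """
    events = []
    for r, (prev_row, cur_row) in enumerate(zip(previous, current)):
        for c, (old, new) in enumerate(zip(prev_row, cur_row)):
            delta = new - old
            if abs(delta) >= threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events


# Two tiny 2x3 brightness grids; only two pixels differ.
previous = [[10, 10, 10],
            [10, 10, 10]]
current = [[10, 40, 10],
           [10, 10, 2]]

events = changed_pixels(previous, current, threshold=5)
print(events)  # [(0, 1, 1), (1, 2, -1)]
```

Only the two pixels that changed produce output, which suggests why this style of sensing can respond quickly: a static scene generates almost no data to process.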

Getting AVs to fly safely around complicated obstacles in the dark is a challenging assignment, yet bats accomplish this task with ease. For the past several years, University of Cincinnati researchers have been developing AVs that rely on fuzzy logic and other forms of artificial intelligence for navigation. The techniques, inspired by bats’ natural flying skills, are expected to improve drone navigation and autonomy. Currently, aerial AVs can be guided manually by line of sight, or by video cameras, GPS technology, or lidar.

More detail on this topic can be found in "Guidance Innovations Promise Safer and More Reliable Autonomous Vehicle Operation" by John Edwards, published in IEEE Signal Processing Magazine in March 2019.