Visible Light Communication for Next Generation Wireless High Fidelity Virtual Reality Systems

Mahmudur Khan and Jacob Chakareski

Virtual and augmented reality (VR/AR) systems have recently become exceedingly popular by enabling new immersive digital experiences. Their application areas are not limited to gaming and entertainment; they also include education and training, environmental and weather sciences, disaster relief, and healthcare. This tremendous potential has resulted in accelerated market growth, with the market expected to reach $120 billion by the year 2022. However, high-quality VR systems such as Oculus Rift and HTC Vive render their rich graphics content on a powerful computer and require HDMI and USB cable connections between the computer and the VR-headset to stream the multi-Gbps data. These cables limit the VR user's mobility and degrade the quality of experience. Standalone VR systems such as Samsung Gear or Google Daydream render the graphics content on the VR-headset or on a smartphone. Although they are wireless, their rendering quality is limited by the capability of the headset or the phone.

Conventional wireless technologies, such as Wi-Fi, cannot provide the high data rates required by high-fidelity VR. Streaming 360° VR videos over them is even more constraining, because such content, which immerses the user in a real remote scene from multiple perspectives, demands even higher data rates. Hence, academic and industry researchers are considering alternative wireless technologies, e.g., mmWave, to support multi-Gbps data rates. Recently, HTC introduced a wireless adapter operating at 60 GHz for its VR-headset, but the quality of the streamed graphics content is not on par with that delivered over a tethered link. Moreover, in the near future, VR systems will render life-like experiences and the required data transfer rate will exceed 1 Tbps. Supporting wireless links with such high throughput will be extremely challenging even for mmWave systems. Thus, researchers are exploring the use of visible light communication (VLC) to enable high-speed wireless VR systems.

In recent years, VLC has attracted significant research interest due to the development of light-emitting diode (LED) technology, which can provide illumination and high-speed wireless data transfer simultaneously. LEDs are anticipated to dominate the illumination market by 2020. Because VLC combines illumination and communication, it has found several indoor and outdoor applications, such as building lighting infrastructure that also provides building-to-building wireless communication, high-speed data transfer between autonomous cars, and high-speed communication inside offices, houses, airplane cabins, and even VR arenas. In a recent work [1], researchers at The University of Alabama proposed the design of a VLC-enabled indoor VR-arena equipped with multiple LEDs or laser diodes (LDs) as optical transmitters, which are connected to a VR-content server through fiber optic cables (Figure 1).

Figure 1 – VLC-enabled VR-arena. Pointed optical transmitters provide high-quality 360° VR videos. An omnidirectional Wi-Fi link provides baseline-quality video and a control channel.

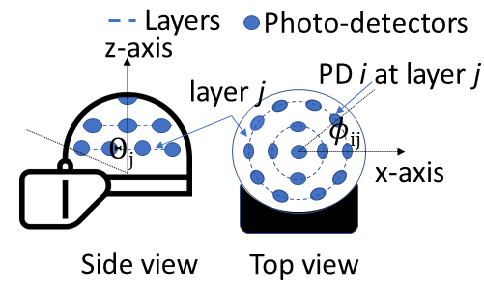

Each optical transmitter creates a small cell, or optical attocell. Together, these cells form an attocell network that covers the whole VR-arena in terms of both illumination and high-speed data transfer. Each VR user is served by one of the transmitters, and a single transmitter can serve multiple users simultaneously. As a user moves within the arena, service can be handed over to another transmitter depending on the user's location. The authors of [1] also present a VR-headset design that has a hemispherical helmet/cap and is equipped with multiple photo-detectors (PDs) placed over the cap's surface (Figure 2).
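The location-based switching between transmitters described above can be sketched as follows. This is only an illustration under stated assumptions, not the selection scheme of [1]: the transmitter grid, the nearest-transmitter rule, and the hysteresis `margin` are all hypothetical choices made here for clarity.

```python
import math
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]  # (x, y) position on the arena floor, in metres


def nearest_transmitter(user: Point, transmitters: Sequence[Point]) -> int:
    """Return the index of the transmitter horizontally closest to the user."""
    return min(range(len(transmitters)),
               key=lambda i: math.dist(user, transmitters[i]))


def update_serving(user: Point, transmitters: Sequence[Point],
                   serving: Optional[int], margin: float = 0.2) -> int:
    """Keep the current transmitter unless another is closer by `margin` metres.

    The hysteresis margin is an assumption of this sketch; it avoids rapid
    back-and-forth switching when the user stands near a cell border.
    """
    best = nearest_transmitter(user, transmitters)
    if serving is None:
        return best
    d_serving = math.dist(user, transmitters[serving])
    d_best = math.dist(user, transmitters[best])
    return best if d_best + margin < d_serving else serving


# Hypothetical 2 x 2 grid of ceiling transmitters, projected onto the floor.
TRANSMITTERS = [(1.0, 1.0), (3.0, 1.0), (1.0, 3.0), (3.0, 3.0)]

if __name__ == "__main__":
    serving = None
    # A user walking from the first cell towards the second one.
    for position in [(0.8, 1.2), (1.9, 1.0), (2.05, 1.0), (2.6, 1.1)]:
        serving = update_serving(position, TRANSMITTERS, serving)
        print(position, "-> transmitter", serving)
```

In a real arena the decision would more plausibly rely on received optical power than on floor distance; distance is used here only because it keeps the example self-contained.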

Figure 2 – VR-headset equipped with photo-detectors.

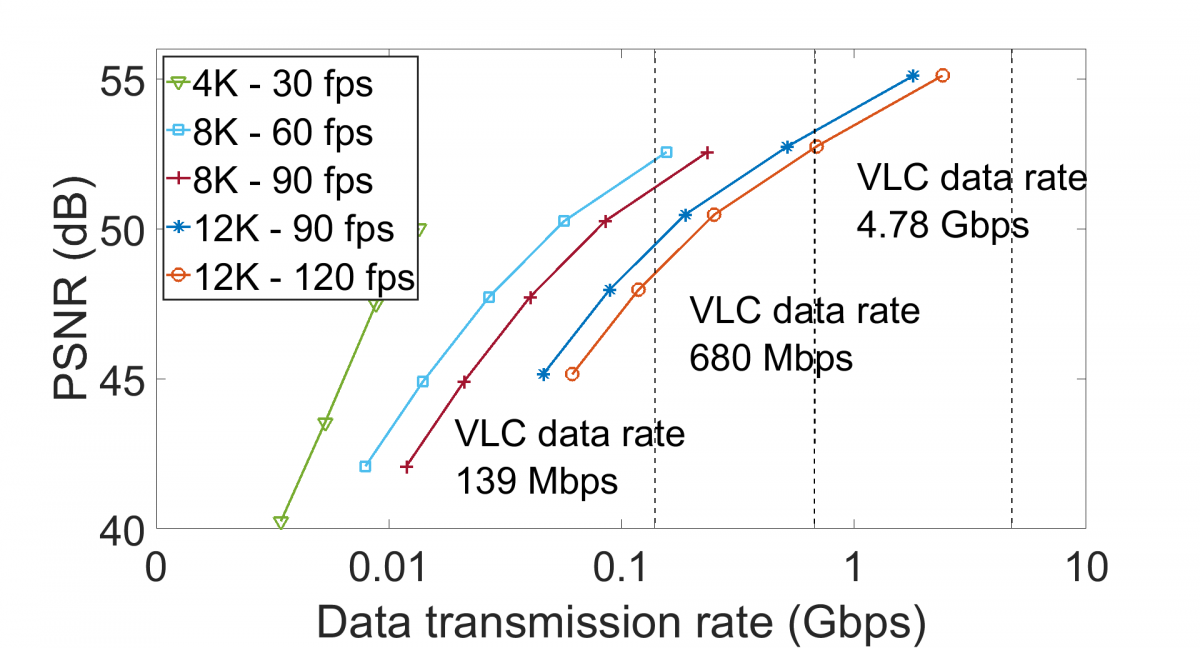
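To illustrate why spreading several photo-detectors over a hemispherical cap helps when the head rotates, the sketch below counts which PDs still have a transmitter inside their field of view after the headset tilts. The PD layout (one on top plus two rings of six), the 60° half field of view, and the single-axis tilt are assumptions of this example and are not taken from [1].

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]


def cap_normals(rings: int = 2, per_ring: int = 6) -> List[Vec]:
    """Unit normals of PDs on a hemispherical cap: one on top plus `rings` rings."""
    normals = [(0.0, 0.0, 1.0)]
    for r in range(1, rings + 1):
        polar = (math.pi / 2) * r / rings  # angle measured from the top of the cap
        for k in range(per_ring):
            az = 2 * math.pi * k / per_ring
            normals.append((math.sin(polar) * math.cos(az),
                            math.sin(polar) * math.sin(az),
                            math.cos(polar)))
    return normals


def tilt_about_x(v: Vec, angle: float) -> Vec:
    """Rotate v by `angle` radians about the x-axis (a simple head tilt)."""
    x, y, z = v
    c, s = math.cos(angle), math.sin(angle)
    return (x, c * y - s * z, s * y + c * z)


def visible_pds(normals: Sequence[Vec], to_tx: Vec, tilt_deg: float,
                half_fov_deg: float = 60.0) -> List[int]:
    """Indices of PDs whose field of view contains the transmitter direction."""
    tilt = math.radians(tilt_deg)
    cos_limit = math.cos(math.radians(half_fov_deg))
    length = math.sqrt(sum(c * c for c in to_tx))
    direction = tuple(c / length for c in to_tx)
    hits = []
    for i, n in enumerate(normals):
        rotated = tilt_about_x(n, tilt)
        # The PD sees the transmitter if the angle between its normal and the
        # transmitter direction is within the half field of view.
        if sum(a * b for a, b in zip(rotated, direction)) >= cos_limit:
            hits.append(i)
    return hits


if __name__ == "__main__":
    overhead = (0.0, 0.0, 1.0)  # transmitter directly above the user
    for tilt in (0.0, 45.0, 90.0):
        single = visible_pds([(0.0, 0.0, 1.0)], overhead, tilt)
        cap = visible_pds(cap_normals(), overhead, tilt)
        print(f"tilt {tilt:5.1f} deg: single PD sees {len(single)}, cap sees {len(cap)}")
```

Under these assumptions, a single top-mounted PD loses an overhead transmitter once the head tilts by 90°, whereas some PDs on the cap still face it.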

The proposed multi-detector design helps maintain wireless connectivity under the random headset orientations caused by the VR user's head movement. State-of-the-art tethered VR systems can stream 4K video at 30 fps with reasonable quality. Streaming high-quality 8K and 12K VR videos is presently not achievable even over the conventional Internet, let alone over wireless systems. A VLC-based VR-arena and VR-headset design can help achieve such high-fidelity streaming at multiple Gbps (Figure 3). Moreover, when integrated with recent advances in viewport-adaptive 360° video streaming [2, 3], such a design can lead to even higher system efficiency and quality of experience.

Figure 3 – High-fidelity 360° VR videos can be streamed with the proposed VLC-based VR-arena and VR-headset design.
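As a rough, back-of-the-envelope illustration of why such streams call for multi-Gbps links, the sketch below computes the raw (uncompressed) bitrate of a video frame sequence. The frame sizes (3840×2160 for 4K, 7680×4320 for 8K) and the 24 bits per pixel are assumptions of this example; the article itself does not specify them, and compressed streams are considerably smaller.

```python
def raw_bitrate_gbps(width: int, height: int, fps: int,
                     bits_per_pixel: int = 24) -> float:
    """Uncompressed video bitrate in Gbps: pixels x bits per pixel x frames per second."""
    return width * height * bits_per_pixel * fps / 1e9


if __name__ == "__main__":
    # Assumed frame sizes; 30 fps matches the frame rate quoted in the text.
    for label, (w, h) in {"4K": (3840, 2160), "8K": (7680, 4320)}.items():
        print(f"{label} at 30 fps: {raw_bitrate_gbps(w, h, 30):.2f} Gbps raw")
```

Doubling the resolution in each dimension quadruples the raw bitrate, which is why moving from 4K to 8K and beyond quickly outgrows conventional wireless links.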

References

1. M. Khan and J. Chakareski, “Visible light communication for next generation untethered virtual reality systems,” in IEEE ICC Optical Wireless Communications Workshop, 2019, https://arxiv.org/abs/1904.03735.

2. J. Chakareski, “VR/AR immersive communication: Caching, edge computing, and transmission trade-offs,” in Proc. SIGCOMM Workshop on VR/AR Network. Los Angeles, CA, USA: ACM, Aug. 2017.

3. X. Corbillon, A. Devlic, G. Simon, and J. Chakareski, “Optimal 360-degree video representation for viewport-adaptive streaming,” in Proc. Int’l Conf. Multimedia. ACM, Oct. 2017, pp. 934–951.